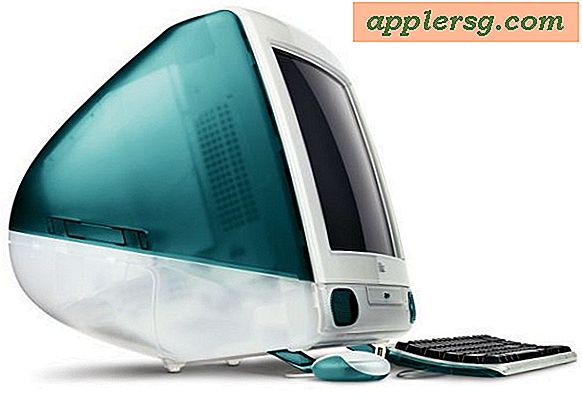

- Mac os x tiger emulator

- Swathi chinukulu serial

- Best browser for mac 10-5 8

> The NVMe hardware in the M1 is quite peculiar: it breaks the spec in multiple ways, requiring patches to the core NVMe support in Linux, and it also is exposed as a platform device instead of PCIe. In addition, it is managed by an ASC, the “ANS”, which needs to be brought up before NVMe can work, and that also relies on a companion “SART” driver, which is like a minimal IOMMU.

Stuff like this makes me wonder: why does Apple do this? If I try to give them the benefit of the doubt, I can assume that these changes are done for performance, cost, power-saving, or maybe even security reasons. Otherwise it just seems like Apple does these things in order to make it harder for other OSes to run on their hardware. Which is certainly their prerogative, but it just makes me think less of them.

> However, Apple is unique in putting emphasis in keeping hardware interfaces compatible across SoC generations – the UART hardware in the M1 dates back to the original iPhone! This means we are in a unique position to be able to try writing drivers that will not only work for the M1, but may work –unchanged– on future chips as well.

... but cynically (or perhaps just realistically), I can easily believe that this isn't done for reasons of openness, but because this makes maintenance of macOS itself easier for Apple.

Thanks for the even-handed reply, which I imagine is much easier to have after diving into all this stuff first-hand (I assume you're the same 'marcan' who wrote the progress report).

As much as many of us want to attribute positive or negative reasons or motivations to things companies do - especially secretive companies like Apple - it's a nice reminder that most decisions are made without malice, because they make the most sense based on the requirements at hand. Having control over the entire hardware and software ecosystem means that there's no reason to follow standards to the letter when those standards get in your way and make things harder, more expensive, or even just not possible.

Anyhow, just wanted to finish by saying all of this work is truly impressive. Obviously there's still a lot to be done to support everything to the same level as, say, a Dell XPS machine, but the progress made so far is pretty amazing, and even though I have no plans to buy an M1 Mac any time soon, I'm always excited to read these updates.

> As much as many of us want to attribute positive or negative reasons or motivations to things companies do - especially secretive companies like Apple - it's a nice reminder that most decisions are made without malice, because they make the most sense based on the requirements at hand.

Keep in mind too that "requirements at hand" can include a significant degree of path dependency, and that it can be a mistake to read too much reasoning into something. Sometimes there just isn't any master plan: some decision made years earlier has created a dilemma due to dependencies built on it since, and there just aren't the resources (or ROI, particularly without any spec compatibility to worry about) to deal with it right then. For a hardware company with necessarily very long lead times (they can't exactly start having chips fabbed and making electronics two weeks before launch), Apple runs a pretty hectic schedule with major new launches every single year. Even a vertically integrated company on their scale is not completely immune to the challenges of tight coupling, nor does it think of everything ahead of time, and there are definitely decisions Apple has made that they regret but can't easily get out from under. For example, just a few weeks ago HN had a sizable thread on "Swift Regrets" by Jordan Rose:

> "I worked on Swift at Apple from pre-release to Swift 5.1. I’m at least partly responsible for many things people like about Swift and many things people hate about Swift. This list is something I started collecting around when I left Apple, and I’m putting them up so other language designers can learn from our mistakes. These are all things that would be hard to change in Swift today, because they’d break tons of people’s code. That’s what happens with real-world languages and libraries: the more users you have, the fewer breaking changes you can make."

For Apple the same definitely turns up in hardware once in a while too, though of course they try to be careful and have a lot of institutional knowledge about pitfalls by this point. You can't always look at something they're doing now and assume that if they were greenfielding it they'd do the exact same thing again.